social structure and the private sector

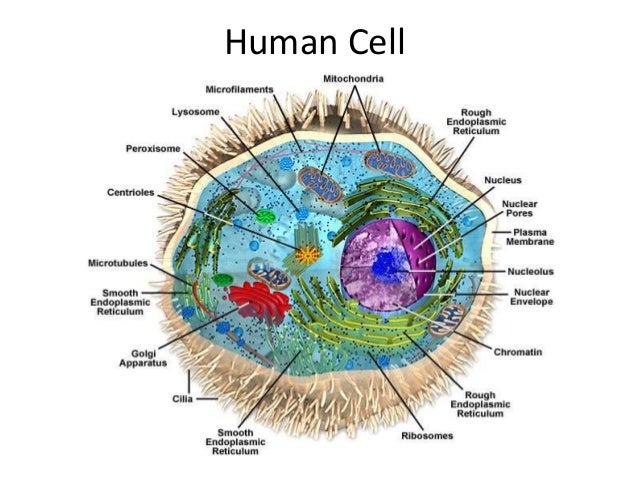

The Human Cell

Academic social scientists leaning towards the public intellectual end of the spectrum love to talk about social norms.

This is perhaps motivated by the fact that these intellectual figures are prominent in the public sphere. The public sphere is where these norms are supposed to solidify, and these intellectuals would like to emphasize their own importance.

I don’t exclude myself from this category of persons. A lot of my work has been about social norms and technology design (Benthall, 2014; Benthall, Gürses and Nissenbaum, 2017).

But I also work in the private sector, and it’s striking how differently things look from that perspective. It’s natural for academics who participate more in the public sphere than the private sector to be biased in their view of social structure. To accurately understand what’s going on, you have to think about both at once.

That’s challenging for a lot of reasons, one of which is that the private sector is a lot less transparent than the public sphere. In general the internals of actors in the private sector are not open to the scrutiny of commentariat onlookers. Information is one of the many resources traded in pairwise interactions; when it is divulged, it is divulged strategically, introducing bias. So it’s hard to get a general picture of the private sector, even though it accounts for a much larger proportion of the social structure than the public sphere does. In other words, public spheres are highly over-represented in analysis of social structure due to the availability of public data about them. That is worrisome from an analytic perspective.

It’s well worth making the point that the public/private dichotomy is problematic. Contextual integrity theory (Nissenbaum, 2009) argues that modern society is differentiated among many distinct spheres, each bound by its own social norms. Nissenbaum actually has a quite different notion of norm formation from, say, Habermas. For Nissenbaum, norms evolve over social history, but may be implicit. Contrast this with Habermas’s view that norms are the result of communicative rationality, which is an explicit and linguistically mediated process. The public sphere is a big deal for Habermas. Nissenbaum, a scholar of privacy, rejects the idea of the ‘public sphere’ simpliciter. Rather, social spheres self-regulate, and privacy, which she defines as appropriate information flow, is maintained when information flows according to these multiple self-regulatory regimes.

I believe Nissenbaum is correct on this point of societal differentiation and norm formation. This nuanced understanding of privacy as the differentiated management of information flow challenges any simplistic notion of the public sphere. Does it challenge a simplistic notion of the private sector?

Naturally, the private sector doesn’t exist in a vacuum. In the modern economy, companies are accountable to the law, especially contract law. They have to pay their taxes. They have to deal with public relations and are regulated as to how they manage information flows internally. Employees can sue their employers, etc. So just as the ‘public sphere’ doesn’t permit a total free-for-all of information flow (some kinds of information flow in public are against social norms!), so too does the ‘private sector’ not involve complete secrecy from the public.

As a hypothesis, we can posit that what makes the private sector different is that the relevant social structures are less open in their relations with each other than they are in the public sphere. We can imagine an autonomous social entity like a biological cell. Internally it may have a lot of interesting structure and organelles. Its membrane prevents this complexity leaking out into the aether, or plasma, or whatever it is that human cells float around in. Indeed, this membrane is necessary for the proper functioning of the organelles, which in turn allows the cell to interact properly with other cells to form a larger organism. Echoes of Francisco Varela.

It’s interesting that this may actually be a quantifiable difference. One way of modeling the difference between the internal and external-facing complexity of an entity is using information theory. The more complex internal state of the entity has higher entropy than the membrane. Because the membrane causally mediates all interaction between the internals and the environment, it limits information flow between them: by the Data Processing Inequality, the environment can learn no more about the internals than the membrane itself carries. This restricted information flow is quantified as lower mutual information between the two domains. At zero mutual information, the two domains are statistically independent of each other.
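To make the information-theoretic picture concrete, here is a minimal sketch of a toy "cell". Everything in it is an illustrative assumption rather than a model of any real organization: the internal state `X` is uniform over eight values, the membrane `M` exposes only the parity of `X`, and the environment `E` sees `M` through a channel that flips it with probability `FLIP`. Since `X → M → E` forms a Markov chain, the Data Processing Inequality says that I(X;E) cannot exceed I(X;M), which in turn cannot exceed H(M).

```python
from collections import defaultdict
from itertools import product
from math import log2


def entropy(dist):
    """Shannon entropy in bits of a {outcome: probability} mapping."""
    return -sum(p * log2(p) for p in dist.values() if p > 0)


def marginal(joint, idx):
    """Marginalize a joint distribution over tuples onto the positions in idx."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[tuple(outcome[i] for i in idx)] += p
    return dict(out)


def mutual_information(joint, a, b):
    """I(A;B) = H(A) + H(B) - H(A,B), in bits."""
    return (entropy(marginal(joint, a))
            + entropy(marginal(joint, b))
            - entropy(marginal(joint, a + b)))


# Toy model (all numbers are illustrative assumptions):
#   X: internal state, uniform over 8 values
#   M: membrane, a deterministic function of X (its parity)
#   E: environment, a noisy observation of M only
FLIP = 0.1  # hypothetical probability that E misreads M

joint = {}
for x, e in product(range(8), range(2)):
    m = x % 2
    p_e_given_m = 1 - FLIP if e == m else FLIP
    joint[(x, m, e)] = (1 / 8) * p_e_given_m

h_x = entropy(marginal(joint, (0,)))            # internal complexity
h_m = entropy(marginal(joint, (1,)))            # what the membrane can carry
i_xm = mutual_information(joint, (0,), (1,))    # internals <-> membrane
i_xe = mutual_information(joint, (0,), (2,))    # internals <-> environment

print(f"H(X)   = {h_x:.3f} bits")
print(f"H(M)   = {h_m:.3f} bits")
print(f"I(X;M) = {i_xm:.3f} bits")
print(f"I(X;E) = {i_xe:.3f} bits")
```

In this toy setup the internals carry 3 bits, the membrane carries 1 bit, and the environment recovers only about 0.53 bits about the internals. Raising `FLIP` toward 0.5 drives I(X;E) toward zero, the statistically independent case.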

I haven’t worked out all the implications of this.

References

Benthall, S. (2015). Designing networked publics for communicative action. In J. Davis & N. Jurgenson (Eds.), Theorizing the Web 2014 [Special Issue]. Interface, 1(1).

Benthall, S., Gürses, S., & Nissenbaum, H. (2017). Contextual integrity through the lens of computer science. Foundations and Trends® in Privacy and Security, 2(1), 1-69. http://dx.doi.org/10.1561/3300000016

Nissenbaum, H. (2009). Privacy in context: Technology, policy, and the integrity of social life. Stanford University Press.